AI-Supervised Learning: Difference between revisions

No edit summary |

No edit summary |

||

| Line 1: | Line 1: | ||

= Supervised Learning = | = Supervised Learning = | ||

* Be able to correctly identify supervised learning problems. | * Be able to correctly identify supervised learning problems. | ||

* | * Have learned how to formulate supervised learning problems. | ||

* Understand what inputs and outputs | * Understand what inputs and outputs would be passed to the learning algorithms. | ||

* | * Be able to identify how the performance of such algorithms can be measured, and pick suitable metrics. | ||

* Understand the theoretical limitations of supervised learning. | * Understand the theoretical limitations of supervised learning. | ||

== Introduction == | == Introduction == | ||

Computer programs are written to precise rules, but we live in a world where the rules are unclear, changing, or noisy. In many cases, seeing | Computer programs are written to precise rules, but we live in a world where the rules are unclear, not always known, changing, or noisy. In many cases, seeing an image or learning by example makes learning easier or more successful. | ||

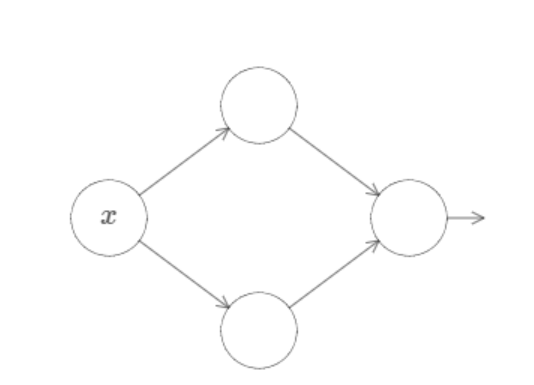

Supervised learning | Supervised learning is learning by example. SL is given input-output pairs (e.g., examples) in which it tries function mapping inputs to outputs. | ||

* | * Example: Cat or dog. Input would be photos, and the outputs: labels (i.e., the right answer, cat or dog). | ||

* | * Other examples: Fraudulent bank transactions or speech recognition. | ||

== Mathematical Formulation == | == Mathematical Formulation == | ||

Formally, the inputs to the algorithm are denoted by \( x \) and the corresponding outputs as \( y \). Note, \( x \) and \( y \) are usually high-dimensional vectors or matrices. We also use \( X \) and \( Y \) as the sets of all possible inputs and outputs, respectively. Provided examples, \( D \), can now be expressed as a set: | |||

\[ D = \{(x_1, y_1), (x_2, y_2), \dots, (x_n, y_n)\} \] | \[ D = \{(x_1, y_1), (x_2, y_2), \dots, (x_n, y_n)\} \] | ||

=== Classification and Regression === | === Classification and Regression === | ||

The set of possible outputs, \( Y \), can be finite or infinite. | |||

* ** | * When \( Y \) is finite and preferably small, we say that it is a classification problem. Predicting what animal appears in the picture is an example of classification. | ||

* Alternatively, we might want to predict a number, or a real vector, in which case it is a regression problem. For example, predicting stock prices (\( y \)) given the time of day (\( x \)). | |||

The line between classification and regression can get a little blurry, with the same problem being represented in different ways. Algorithms for classification often predict the probability for each class present in \( Y \), and since the probability is a real number, it could be considered a regression problem. Output probabilities can also be used as a measure of confidence, with high probability indicating that the algorithm is certain about its prediction. | |||

=== Reformulating Problems === | |||

* **Classification as Regression:** We can separate data points with a line. While regression is primarily used to predict \( y \) given \( x \), the solution to a regression can be thought of as a continuous line or plane. If this is the case, one might consider that this line or plane can be used to separate different classes of data points. | |||

* **Regression as Classification:** Consider predicting rent prices, where the prices vary from £500-£5000. The prices can be discretized (i.e., the values made discrete as opposed to continuous) into non-overlapping buckets. For instance, £100 can be a separate bucket. The question to consider is how small a bucket should be. | |||

== Example Application: Self-Driving Cars == | |||

The problem of engineering a self-driving car can be addressed using supervised learning by training models to mimic the decisions that human drivers make under various conditions. | |||

=== Process === | |||

1. **Data Collection:** | |||

* Gather data from human-driven cars equipped with cameras, LIDAR, radar, GPS, and other sensors. This data could include videos and images of the road, sensor readings, and information about the car's actions (e.g., steering angles, acceleration, braking). | |||

* The dataset should cover a variety of driving conditions like different weather (rain, fog, snow), traffic conditions (highway, city driving), and environments (urban areas, rural roads). | |||

2. **Labeling the Data:** | |||

* The data is labeled with the actions the human driver took in each scenario. For example, each frame of video or sensor data might be paired with the steering angle, speed, or whether the car should brake or accelerate. | |||

* For instance, if an image shows a red light ahead, the corresponding label could be to "brake." If the car needs to navigate around a parked vehicle, the label might specify a steering adjustment. | |||

3. **Feature Extraction:** | |||

* Process the input data to extract useful features that help the model learn patterns. These features might include road lane markings, traffic signs, the position of other vehicles, pedestrians, and the distance between the self-driving car and other objects. | |||

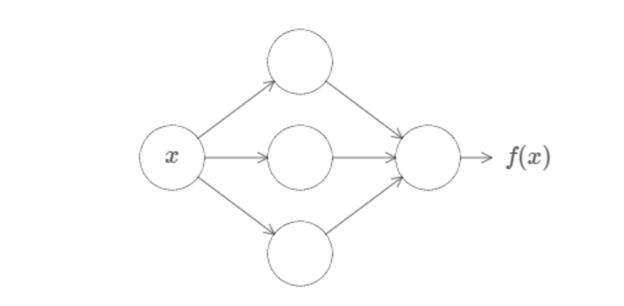

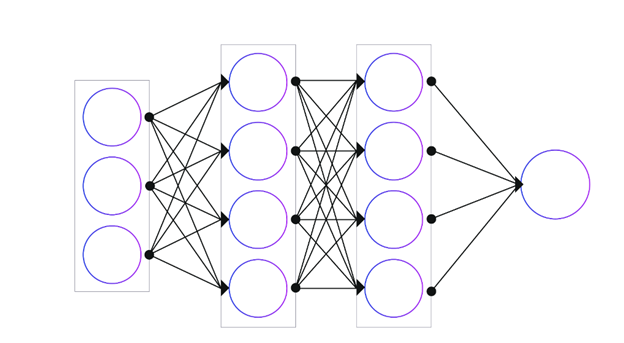

* In many modern approaches, deep learning techniques like convolutional neural networks (CNNs) are used to automatically extract these features from raw sensor data (e.g., camera images). | |||

4. **Model Training:** | |||

* Use the labeled data to train a supervised learning model, such as a neural network. The goal of the model is to learn a mapping from input features (e.g., images, sensor readings) to output labels (e.g., steering angle, acceleration). | |||

* The model adjusts its parameters to minimize the difference between its predictions (how it would drive) and the actions taken by the human driver in the training data. | |||

5. **Model Evaluation:** | |||

* Evaluate the model on a separate test set to ensure it generalizes well to new driving scenarios it hasn’t seen before. | |||

* For example, the test set could include driving situations like new road types or lighting conditions to verify that the model’s predictions align with what a human driver would do. | |||

6. **Deployment and Feedback:** | |||

* Once the model is performing well, it can be deployed in a self-driving car for real-world testing. | |||

* As the car drives, it can continue to collect data on new scenarios, and this data can be used to further fine-tune or retrain the model, continuously improving its decision-making. | |||

By using supervised learning, the self-driving car learns to approximate the behavior of a human driver, making decisions based on patterns observed in the labeled training data. This approach is effective for handling a wide range of driving scenarios and helps the car to navigate in complex environments. | |||

== Objectives in Supervised Learning == | |||

Ideally, the learning algorithm will learn to make predictions close to true values. But it can be hard to define what exactly we mean by "close" in some contexts. Defining a measure of similarity is an important part of the problem specification, as it usually determines how we will be optimizing and judging the approximation. | |||

If you can define "closeness," then you can usually define a loss function. The loss function is the objective being optimized when training. | |||

== Metrics for Regression Problems == | |||

For regression problems, i.e., those that predict a number or a vector, the usual metrics include: | |||

1. **Mean Absolute Error (MAE):** | |||

\[ | |||

\text{MAE} = \frac{1}{n} \sum_{i=1}^{n} |y_i - \hat{y}_i| | |||

\] | |||

2. **Mean Squared Error (MSE):** | |||

\[ | |||

\text{MSE} = \frac{1}{n} \sum_{i=1}^{n} (y_i - \hat{y}_i)^2 | |||

\] | |||

MSE is often used in optimization due to its nice mathematical properties (it can be differentiated). | |||

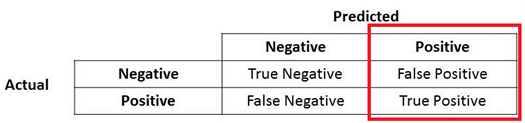

== Metrics for Classification Problems == | |||

Classification is a bit trickier, as we predict some fixed categories. An obvious metric is accuracy: | |||

\[ | |||

\text{Accuracy} = \frac{\text{True Positives} + \text{True Negatives}}{\text{Total Predictions}} | |||

\] | |||

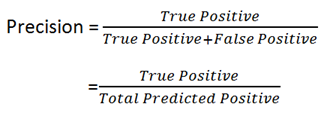

=== Precision and Recall === | |||

* **Precision:** Out of all the examples predicted as positive, how many were actually positive? | |||

\[ | |||

\text{Precision} = \frac{\text{True Positives}}{\text{True Positives} + \text{False Positives}} | |||

\] | |||

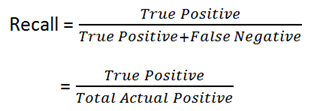

* **Recall:** Out of all actual positive examples, how many were correctly predicted as positive? | |||

\[ | |||

\text{Recall} = \frac{\text{True Positives}}{\text{True Positives} + \text{False Negatives}} | |||

\] | |||

=== F1 Score === | |||

When there is a need to balance precision and recall, F1 Score is used: | |||

\[ | |||

\text{F1 Score} = 2 \cdot \frac{\text{Precision} \cdot \text{Recall}}{\text{Precision} + \text{Recall}} | |||

\] | |||

== Generalization and Overfitting == | |||

Having defined the problem, we now focus on generalization. Overfitting happens when a model performs exceptionally well on training data but poorly on unseen test data. The key is to ensure the model generalizes beyond the training examples. | |||

== Real-World Applications == | |||

1. **Predicting Commute Times:** | |||

* **Inputs:** Start time, day of the week, weather conditions, traffic data, distance, route taken, special events, road incidents. | |||

* **Outputs:** Predicted commute time. | |||

* **Use Cases:** Navigation apps, daily commuters, transportation authorities. | |||

2. **Other Applications:** Self-driving cars, fraud detection, medical diagnoses, etc. | |||

== Precision and Recall == | |||

The formula for calculating Precision and Recall is as follows: | |||

=== Precision === | |||

Let us look at Precision first. | |||

The denominator is actually the **Total Predicted Positive**. So the formula becomes: | |||

\[ | |||

\text{True Positive} + \text{False Positive} = \text{Total Predicted Positive} | |||

\] | |||

Immediately, you can see that Precision talks about how precise or accurate your model is out of those predicted positive, how many of them are actually positive. | |||

Precision is a good measure to determine performance when the cost of False Positives is high. For instance, in email spam detection, a False Positive means that an email that is non-spam (actual negative) has been identified as spam (predicted spam). The email user might lose important emails if the precision is not high for the spam detection model. | |||

=== Recall === | |||

Now let us apply the same logic to Recall. Recall is calculated as: | |||

\[ | |||

\text{True Positive} + \text{False Negative} = \text{Actual Positive} | |||

\] | |||

Recall actually calculates how many of the Actual Positives our model captures through labeling it as Positive (True Positive). Applying the same understanding, we know that Recall should be the model metric we use to select our best model when there is a high cost associated with False Negatives. | |||

For instance: | |||

* **Fraud Detection:** If a fraudulent transaction (Actual Positive) is predicted as non-fraudulent (Predicted Negative), the consequence can be very bad for the bank. | |||

* **Sick Patient Detection:** If a sick patient (Actual Positive) goes through the test and is predicted as not sick (Predicted Negative), the cost associated with False Negatives will be extremely high, especially if the sickness is contagious. | |||

=== F1 Score === | |||

Now if you read a lot of other literature on Precision and Recall, you cannot avoid the other measure, **F1 Score**, which is a function of Precision and Recall. The formula is as follows: | |||

\[ | |||

F1 = 2 \cdot \frac{\text{Precision} \cdot \text{Recall}}{\text{Precision} + \text{Recall}} | |||

\] | |||

F1 Score is needed when you want to seek a balance between Precision and Recall. | |||

**Comparison with Accuracy:** Accuracy can be largely influenced by a large number of True Negatives, which in most business circumstances, are not the focus. False Negatives and False Positives usually have business costs (tangible and intangible), so F1 Score might be a better measure when there is an uneven class distribution (a large number of Actual Negatives). | |||

=== Example === | |||

Consider the following classifiers: | |||

**Classifier 1:** | |||

* True Negatives: 107 | |||

* False Positives: 36 | |||

* False Negatives: 12 | |||

* True Positives: 45 | |||

**Classifier 2:** | |||

* True Negatives: 116 | |||

* False Positives: 4 | |||

* False Negatives: 30 | |||

* True Positives: 50 | |||

==== Part (a): Accuracy of Each Classifier ==== | |||

Accuracy is defined as the ratio of the number of correct predictions (both True Positives and True Negatives) to the total number of predictions: | |||

\[ | |||

\text{Accuracy} = \frac{\text{True Positives} + \text{True Negatives}}{\text{Total Predictions}} | |||

\] | |||

For Classifier 1: | |||

\[ | |||

\text{Accuracy}_1 = \frac{107 + 45}{107 + 36 + 12 + 45} = \frac{152}{200} = 0.76 \quad (76\% \text{ accuracy}) | |||

\] | |||

=== | For Classifier 2: | ||

\[ | |||

\text{Accuracy}_2 = \frac{116 + 50}{116 + 4 + 30 + 50} = \frac{166}{200} = 0.83 \quad (83\% \text{ accuracy}) | |||

\] | |||

== | ==== Part (b): Argument for Preferring Classifier 1 ==== | ||

While Classifier 2 has a higher overall accuracy (83% compared to 76%), Classifier 1 might be preferable in situations where the Recall (sensitivity) is more important than accuracy. | |||

2 | |||

**Recall Formula:** | |||

\[ | |||

* | \text{Recall} = \frac{\text{True Positives}}{\text{True Positives} + \text{False Negatives}} | ||

* | \] | ||

* | |||

* | |||

**Recall for Classifier 1:** | |||

\[ | |||

\text{Recall}_1 = \frac{45}{45 + 12} = \frac{45}{57} \approx 0.79 \quad (79\% \text{ recall}) | |||

\] | |||

**Recall for Classifier 2:** | |||

\[ | |||

* * | \text{Recall}_2 = \frac{50}{50 + 30} = \frac{50}{80} = 0.625 \quad (62.5\% \text{ recall}) | ||

\] | |||

**Reasons to Prefer Classifier 1:** | |||

* * | 1. **Higher Recall:** Classifier 1 has a higher Recall (79%) compared to Classifier 2 (62.5%). This means that Classifier 1 is better at identifying True Positive cases—more patients who actually have cancer are correctly identified. | ||

* * | 2. **Importance of Recall in Medical Diagnoses:** In the context of detecting a serious condition like cancer, False Negatives (failing to identify a cancer case) can be far more dangerous than False Positives (mistakenly predicting cancer when there is none). Missing a positive case could delay critical treatment. Thus, even though Classifier 2 has better accuracy, Classifier 1 might be preferred because it minimizes the number of missed cancer diagnoses. | ||

In summary, while Classifier 2 is more accurate overall, Classifier 1 has a better balance between catching True Positive cases and avoiding False Negatives, making it more suitable when it is crucial not to miss positive cases. | |||

== References == | == References == | ||

Latest revision as of 16:36, 4 January 2025

Supervised Learning

- Be able to correctly identify supervised learning problems.

- Have learned how to formulate supervised learning problems.

- Understand what inputs and outputs would be passed to the learning algorithms.

- Be able to identify how the performance of such algorithms can be measured, and pick suitable metrics.

- Understand the theoretical limitations of supervised learning.

Introduction

Computer programs are written to precise rules, but we live in a world where the rules are unclear, not always known, changing, or noisy. In many cases, seeing an image or learning by example makes learning easier or more successful.

Supervised learning (SL) is learning by example. The algorithm is given input-output pairs (i.e., examples) and tries to learn a function mapping inputs to outputs.

- Example: Cat or dog. The inputs are photos, and the outputs are labels (i.e., the right answer, cat or dog).

- Other examples: Fraudulent bank transactions or speech recognition.

Mathematical Formulation

Formally, the inputs to the algorithm are denoted by \( x \) and the corresponding outputs as \( y \). Note, \( x \) and \( y \) are usually high-dimensional vectors or matrices. We also use \( X \) and \( Y \) as the sets of all possible inputs and outputs, respectively. Provided examples, \( D \), can now be expressed as a set:

\[ D = \{(x_1, y_1), (x_2, y_2), \dots, (x_n, y_n)\} \]
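As a rough illustration of this notation, the example set \( D \) can be held as a list of input-output pairs and compared against a candidate function. The feature values, the labels, and the `predict` rule below are hypothetical and chosen only to keep the sketch small.

```python
from typing import List, Tuple

# Each example pairs an input vector x with its output y (hypothetical toy values).
Example = Tuple[List[float], str]

D: List[Example] = [
    ([0.9, 0.1], "cat"),
    ([0.2, 0.8], "dog"),
    ([0.7, 0.3], "cat"),
]


def predict(x: List[float]) -> str:
    """A hand-written stand-in for the learned function from X to Y."""
    return "cat" if x[0] > x[1] else "dog"


# Fraction of examples in D on which the candidate function reproduces y.
agreement = sum(predict(x) == y for x, y in D) / len(D)
print(agreement)
```

A learning algorithm would search for such a function automatically instead of having it written by hand.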

Classification and Regression

The set of possible outputs, \( Y \), can be finite or infinite.

- When \( Y \) is finite and preferably small, we say that it is a classification problem. Predicting what animal appears in the picture is an example of classification.

- Alternatively, we might want to predict a number, or a real vector, in which case it is a regression problem. For example, predicting stock prices (\( y \)) given the time of day (\( x \)).

The line between classification and regression can get a little blurry, with the same problem being represented in different ways. Algorithms for classification often predict the probability for each class present in \( Y \), and since the probability is a real number, it could be considered a regression problem. Output probabilities can also be used as a measure of confidence, with high probability indicating that the algorithm is certain about its prediction.
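One common way to obtain per-class probabilities is to pass raw model scores through a softmax function. The notes do not name a specific method, so the sketch below is only an assumption about how this might be done; the class names and scores are made up.

```python
import math
from typing import List


def softmax(scores: List[float]) -> List[float]:
    """Turn arbitrary real scores into probabilities that sum to 1."""
    m = max(scores)  # subtract the maximum for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


classes = ["cat", "dog", "rabbit"]
scores = [2.0, 0.5, -1.0]  # hypothetical raw outputs of a model

probs = softmax(scores)
best = max(range(len(classes)), key=lambda i: probs[i])
predicted_class = classes[best]
confidence = probs[best]  # probability of the chosen class

print(predicted_class, round(confidence, 3))
```

The predicted class is the one with the highest probability, and that probability doubles as the confidence measure mentioned above.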

Reformulating Problems

- **Classification as Regression:** We can separate data points with a line. While regression is primarily used to predict \( y \) given \( x \), the solution to a regression can be thought of as a continuous line or plane. If this is the case, one might consider that this line or plane can be used to separate different classes of data points.

- **Regression as Classification:** Consider predicting rent prices, where the prices vary from £500 to £5000. The prices can be discretized (i.e., the values made discrete as opposed to continuous) into non-overlapping buckets. For instance, each £100 range can be a separate bucket, and the algorithm then predicts the bucket rather than the exact price. The question to consider is how small a bucket should be.
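The bucketing idea can be sketched as follows, assuming the £500 to £5000 range and £100 buckets from the example above. The function names and the choice to place the upper edge in the last bucket are illustrative assumptions.

```python
def price_to_bucket(price: float, low: float = 500.0,
                    high: float = 5000.0, width: float = 100.0) -> int:
    """Map a rent price to the index of its bucket (the class label)."""
    if not low <= price <= high:
        raise ValueError("price outside the supported range")
    n_buckets = int((high - low) // width)
    index = int((price - low) // width)
    # The upper edge (price == high) is placed in the last bucket.
    return min(index, n_buckets - 1)


def bucket_to_price(index: int, low: float = 500.0, width: float = 100.0) -> float:
    """Map a predicted bucket back to a representative price (its midpoint)."""
    return low + (index + 0.5) * width


print(price_to_bucket(1250.0))   # class label for a rent of 1250
print(bucket_to_price(7))        # midpoint of bucket 7
```

Smaller buckets give finer price predictions but more classes and fewer examples per class, which is the trade-off the question above points at.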

Example Application: Self-Driving Cars

The problem of engineering a self-driving car can be addressed using supervised learning by training models to mimic the decisions that human drivers make under various conditions.

Process

1. **Data Collection:**

* Gather data from human-driven cars equipped with cameras, LIDAR, radar, GPS, and other sensors. This data could include videos and images of the road, sensor readings, and information about the car's actions (e.g., steering angles, acceleration, braking).

* The dataset should cover a variety of driving conditions like different weather (rain, fog, snow), traffic conditions (highway, city driving), and environments (urban areas, rural roads).

2. **Labeling the Data:**

* The data is labeled with the actions the human driver took in each scenario. For example, each frame of video or sensor data might be paired with the steering angle, speed, or whether the car should brake or accelerate.

* For instance, if an image shows a red light ahead, the corresponding label could be to "brake." If the car needs to navigate around a parked vehicle, the label might specify a steering adjustment.

3. **Feature Extraction:**

* Process the input data to extract useful features that help the model learn patterns. These features might include road lane markings, traffic signs, the position of other vehicles, pedestrians, and the distance between the self-driving car and other objects.

* In many modern approaches, deep learning techniques like convolutional neural networks (CNNs) are used to automatically extract these features from raw sensor data (e.g., camera images).

4. **Model Training:**

* Use the labeled data to train a supervised learning model, such as a neural network. The goal of the model is to learn a mapping from input features (e.g., images, sensor readings) to output labels (e.g., steering angle, acceleration).

* The model adjusts its parameters to minimize the difference between its predictions (how it would drive) and the actions taken by the human driver in the training data.

5. **Model Evaluation:**

* Evaluate the model on a separate test set to ensure it generalizes well to new driving scenarios it hasn’t seen before.

* For example, the test set could include driving situations like new road types or lighting conditions to verify that the model’s predictions align with what a human driver would do.

6. **Deployment and Feedback:**

* Once the model is performing well, it can be deployed in a self-driving car for real-world testing.

* As the car drives, it can continue to collect data on new scenarios, and this data can be used to further fine-tune or retrain the model, continuously improving its decision-making.

By using supervised learning, the self-driving car learns to approximate the behavior of a human driver, making decisions based on patterns observed in the labeled training data. This approach is effective for handling a wide range of driving scenarios and helps the car to navigate in complex environments.
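The training and evaluation steps can be sketched on a drastically simplified version of the problem. Everything here is an assumption made for illustration: a single input feature (lane offset), noise-free synthetic "human" steering data, and a linear model fitted by gradient descent instead of a neural network.

```python
from typing import List, Tuple

# Hypothetical logged data: (lane offset in metres, steering angle chosen by the human).
logged: List[Tuple[float, float]] = [
    (o / 10.0, -0.5 * (o / 10.0)) for o in range(-10, 11)
]

# Hold out every fourth example as a test set; train on the rest.
test = logged[::4]
train = [ex for i, ex in enumerate(logged) if i % 4 != 0]


def mse(w: float, b: float, data: List[Tuple[float, float]]) -> float:
    """Mean squared difference between predicted and human steering angles."""
    return sum((w * x + b - y) ** 2 for x, y in data) / len(data)


def fit(data: List[Tuple[float, float]], lr: float = 0.1, steps: int = 2000):
    """Adjust w and b by gradient descent to minimize the MSE on the data."""
    w, b = 0.0, 0.0
    n = len(data)
    for _ in range(steps):
        grad_w = sum(2 * (w * x + b - y) * x for x, y in data) / n
        grad_b = sum(2 * (w * x + b - y) for x, y in data) / n
        w -= lr * grad_w
        b -= lr * grad_b
    return w, b


w, b = fit(train)
test_error = mse(w, b, test)  # error on examples not used for training
print(w, b, test_error)
```

Because the synthetic data is exactly linear, the fitted model recovers the underlying rule and the held-out error is close to zero; real driving data would be noisy and far higher-dimensional.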

Objectives in Supervised Learning

Ideally, the learning algorithm will learn to make predictions close to true values. But it can be hard to define what exactly we mean by "close" in some contexts. Defining a measure of similarity is an important part of the problem specification, as it usually determines how we will be optimizing and judging the approximation.

If you can define "closeness," then you can usually define a loss function. The loss function is the objective being optimized when training.

Metrics for Regression Problems

For regression problems, i.e., those that predict a number or a vector, the usual metrics include:

1. **Mean Absolute Error (MAE):**

\[ \text{MAE} = \frac{1}{n} \sum_{i=1}^{n} |y_i - \hat{y}_i| \]

2. **Mean Squared Error (MSE):**

\[ \text{MSE} = \frac{1}{n} \sum_{i=1}^{n} (y_i - \hat{y}_i)^2 \]

Here \( y_i \) is the true output and \( \hat{y}_i \) is the model's prediction for example \( i \). MSE is often used in optimization due to its nice mathematical properties: it is differentiable everywhere, whereas the absolute value in MAE is not differentiable at zero error.
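A minimal sketch of both metrics, using made-up true values and predictions:

```python
from typing import List


def mean_absolute_error(y_true: List[float], y_pred: List[float]) -> float:
    """Average of |y_i - y_hat_i| over all examples."""
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)


def mean_squared_error(y_true: List[float], y_pred: List[float]) -> float:
    """Average of (y_i - y_hat_i)^2 over all examples."""
    return sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true)


y_true = [3.0, 5.0, 2.0, 7.0]  # hypothetical true outputs
y_pred = [2.0, 5.0, 4.0, 4.0]  # hypothetical predictions

mae = mean_absolute_error(y_true, y_pred)
mse = mean_squared_error(y_true, y_pred)
print(mae, mse)
```

The absolute errors here are 1, 0, 2 and 3, so MAE is 1.5 while MSE is 3.5: squaring makes the single largest error dominate the score.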

Metrics for Classification Problems

Classification is a bit trickier, as we predict some fixed categories. An obvious metric is accuracy:

\[ \text{Accuracy} = \frac{\text{True Positives} + \text{True Negatives}}{\text{Total Predictions}} \]

Precision and Recall

- **Precision:** Out of all the examples predicted as positive, how many were actually positive?

\[ \text{Precision} = \frac{\text{True Positives}}{\text{True Positives} + \text{False Positives}} \]

- **Recall:** Out of all actual positive examples, how many were correctly predicted as positive?

\[ \text{Recall} = \frac{\text{True Positives}}{\text{True Positives} + \text{False Negatives}} \]

F1 Score

When there is a need to balance precision and recall, F1 Score is used:

\[ \text{F1 Score} = 2 \cdot \frac{\text{Precision} \cdot \text{Recall}}{\text{Precision} + \text{Recall}} \]
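The classification metrics above can be computed from lists of true and predicted labels. The sketch below assumes binary labels with 1 as the positive class; the label lists are invented for illustration.

```python
from typing import List, Tuple


def confusion_counts(y_true: List[int], y_pred: List[int],
                     positive: int = 1) -> Tuple[int, int, int, int]:
    """Return (TP, FP, FN, TN) for a binary problem."""
    tp = fp = fn = tn = 0
    for t, p in zip(y_true, y_pred):
        if p == positive and t == positive:
            tp += 1
        elif p == positive:
            fp += 1
        elif t == positive:
            fn += 1
        else:
            tn += 1
    return tp, fp, fn, tn


y_true = [1, 1, 1, 0, 0, 0, 0, 1]  # hypothetical ground truth
y_pred = [1, 0, 1, 0, 1, 0, 0, 1]  # hypothetical predictions

tp, fp, fn, tn = confusion_counts(y_true, y_pred)
accuracy = (tp + tn) / (tp + fp + fn + tn)
precision = tp / (tp + fp)
recall = tp / (tp + fn)
f1 = 2 * precision * recall / (precision + recall)
print(accuracy, precision, recall, f1)
```

This toy data gives 3 True Positives, 1 False Positive, 1 False Negative and 3 True Negatives, so all four metrics come out at 0.75. The divisions assume at least one predicted positive and one actual positive; a fuller version would guard against zero denominators.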

Generalization and Overfitting

Having defined the problem, we now focus on generalization. Overfitting happens when a model performs exceptionally well on training data but poorly on unseen test data. The key is to ensure the model generalizes beyond the training examples.
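An extreme form of overfitting is a model that simply memorizes its training examples. The sketch below contrasts such a memorizer with a simple hand-written rule standing in for a model that generalizes; the data points, the class names, and both models are hypothetical.

```python
from typing import Callable, List, Tuple

Point = Tuple[int, int]
Data = List[Tuple[Point, str]]

train: Data = [((1, 1), "cat"), ((2, 1), "cat"), ((8, 9), "dog"), ((9, 8), "dog")]
test: Data = [((1, 2), "cat"), ((2, 2), "cat"), ((9, 9), "dog"), ((8, 8), "dog")]


class Memorizer:
    """Stores every training pair and answers only by exact lookup."""

    def __init__(self, data: Data) -> None:
        self.table = dict(data)
        self.default = data[0][1]  # arbitrary fallback for unseen inputs

    def predict(self, x: Point) -> str:
        return self.table.get(x, self.default)


def threshold_rule(x: Point) -> str:
    """A simple rule that captures the overall pattern in the data."""
    return "cat" if x[0] + x[1] < 10 else "dog"


def accuracy(predict: Callable[[Point], str], data: Data) -> float:
    return sum(predict(x) == y for x, y in data) / len(data)


memorizer = Memorizer(train)
print(accuracy(memorizer.predict, train), accuracy(memorizer.predict, test))
print(accuracy(threshold_rule, train), accuracy(threshold_rule, test))
```

The memorizer is perfect on the training set but only matches half of the unseen test points, while the simple rule is correct on both sets. Comparing training and test performance in this way is the usual signal for overfitting.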

Real-World Applications

1. **Predicting Commute Times:**

* **Inputs:** Start time, day of the week, weather conditions, traffic data, distance, route taken, special events, road incidents.

* **Outputs:** Predicted commute time.

* **Use Cases:** Navigation apps, daily commuters, transportation authorities.

2. **Other Applications:** Self-driving cars, fraud detection, medical diagnoses, etc.
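For the commute-time example, one possible way to turn a few of the listed inputs into a numeric vector \( x \) with output \( y \) is sketched below. The field names, units, and the one-hot encoding of the weekday are assumptions, and only a subset of the inputs is included.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class CommuteExample:
    start_hour: float       # e.g. 8.5 means 08:30
    day_of_week: int        # 0 = Monday ... 6 = Sunday
    raining: bool           # simplified weather condition
    distance_km: float
    commute_minutes: float  # the output to be predicted


def to_features(ex: CommuteExample) -> List[float]:
    """Encode the inputs as a flat numeric vector; the weekday is one-hot encoded."""
    one_hot_day = [1.0 if ex.day_of_week == d else 0.0 for d in range(7)]
    return [ex.start_hour, float(ex.raining), ex.distance_km] + one_hot_day


example = CommuteExample(start_hour=8.5, day_of_week=0, raining=True,
                         distance_km=12.0, commute_minutes=35.0)
x = to_features(example)
y = example.commute_minutes
print(x, y)
```

Since the output is a number of minutes, this is a regression problem.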

Precision and Recall

This section revisits the Precision and Recall formulas defined earlier and explains when each metric matters.

Precision

Let us look at Precision first.

The denominator, True Positive + False Positive, is actually the **Total Predicted Positive**. So the formula becomes:

\[ \text{Precision} = \frac{\text{True Positive}}{\text{True Positive} + \text{False Positive}} = \frac{\text{True Positive}}{\text{Total Predicted Positive}} \]

Precision therefore measures how reliable the model's positive predictions are: out of the examples predicted positive, how many are actually positive.

Precision is a good measure to determine performance when the cost of False Positives is high. For instance, in email spam detection, a False Positive means that an email that is non-spam (actual negative) has been identified as spam (predicted spam). The email user might lose important emails if the precision is not high for the spam detection model.

Recall

Now let us apply the same logic to Recall. Its denominator, True Positive + False Negative, is the total number of **Actual Positives**, so Recall is calculated as:

\[ \text{Recall} = \frac{\text{True Positive}}{\text{True Positive} + \text{False Negative}} = \frac{\text{True Positive}}{\text{Total Actual Positive}} \]

Recall measures how many of the Actual Positives the model captures by labeling them as Positive (True Positives). By the same reasoning, Recall is the metric to use for selecting the best model when there is a high cost associated with False Negatives.

For instance:

- **Fraud Detection:** If a fraudulent transaction (Actual Positive) is predicted as non-fraudulent (Predicted Negative), the consequence can be very bad for the bank.

- **Sick Patient Detection:** If a sick patient (Actual Positive) goes through the test and is predicted as not sick (Predicted Negative), the cost associated with False Negatives will be extremely high, especially if the sickness is contagious.

F1 Score

Most literature on Precision and Recall also introduces a third measure, the **F1 Score**, which is the harmonic mean of Precision and Recall. The formula is as follows:

\[ F1 = 2 \cdot \frac{\text{Precision} \cdot \text{Recall}}{\text{Precision} + \text{Recall}} \]

F1 Score is needed when you want to seek a balance between Precision and Recall.

**Comparison with Accuracy:** Accuracy can be largely influenced by a large number of True Negatives, which in most business circumstances are not the focus. False Negatives and False Positives usually have business costs (tangible and intangible), so F1 Score might be a better measure when there is an uneven class distribution (a large number of Actual Negatives).
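The effect of an uneven class distribution can be seen with invented confusion-matrix counts for 1000 examples, of which only 10 are positive. Treating Precision, Recall, and F1 as 0 when their denominator is 0 is a convention assumed here, not something stated in these notes.

```python
def accuracy(tp: int, fp: int, fn: int, tn: int) -> float:
    return (tp + tn) / (tp + fp + fn + tn)


def f1_score(tp: int, fp: int, fn: int) -> float:
    """F1 from counts; returns 0.0 when Precision or Recall is undefined or zero."""
    precision = tp / (tp + fp) if (tp + fp) > 0 else 0.0
    recall = tp / (tp + fn) if (tp + fn) > 0 else 0.0
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


# Hypothetical model A: always predicts "negative".
acc_a = accuracy(tp=0, fp=0, fn=10, tn=990)
f1_a = f1_score(tp=0, fp=0, fn=10)

# Hypothetical model B: finds 8 of the 10 positives at the cost of 20 false alarms.
acc_b = accuracy(tp=8, fp=20, fn=2, tn=970)
f1_b = f1_score(tp=8, fp=20, fn=2)

print(acc_a, f1_a)
print(acc_b, round(f1_b, 3))
```

Model A scores 99% accuracy without finding a single positive case, and its F1 Score of 0 exposes that. Model B has slightly lower accuracy (97.8%) but a clearly higher F1 Score.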

Example

Consider the following classifiers:

**Classifier 1:**

- True Negatives: 107

- False Positives: 36

- False Negatives: 12

- True Positives: 45

**Classifier 2:**

- True Negatives: 116

- False Positives: 4

- False Negatives: 30

- True Positives: 50

Part (a): Accuracy of Each Classifier

Accuracy is defined as the ratio of the number of correct predictions (both True Positives and True Negatives) to the total number of predictions:

\[ \text{Accuracy} = \frac{\text{True Positives} + \text{True Negatives}}{\text{Total Predictions}} \]

For Classifier 1:

\[ \text{Accuracy}_1 = \frac{107 + 45}{107 + 36 + 12 + 45} = \frac{152}{200} = 0.76 \quad (76\% \text{ accuracy}) \]

For Classifier 2:

\[ \text{Accuracy}_2 = \frac{116 + 50}{116 + 4 + 30 + 50} = \frac{166}{200} = 0.83 \quad (83\% \text{ accuracy}) \]

Part (b): Argument for Preferring Classifier 1

While Classifier 2 has a higher overall accuracy (83% compared to 76%), Classifier 1 might be preferable in situations where the Recall (sensitivity) is more important than accuracy.

**Recall Formula:**

\[ \text{Recall} = \frac{\text{True Positives}}{\text{True Positives} + \text{False Negatives}} \]

**Recall for Classifier 1:**

\[ \text{Recall}_1 = \frac{45}{45 + 12} = \frac{45}{57} \approx 0.79 \quad (79\% \text{ recall}) \]

**Recall for Classifier 2:**

\[ \text{Recall}_2 = \frac{50}{50 + 30} = \frac{50}{80} = 0.625 \quad (62.5\% \text{ recall}) \]

**Reasons to Prefer Classifier 1:**

1. **Higher Recall:** Classifier 1 has a higher Recall (79%) compared to Classifier 2 (62.5%). This means that Classifier 1 correctly identifies a larger share of the actual positive cases. If the classifiers are screening for cancer, for example, a larger share of the patients who actually have cancer are correctly identified.

2. **Importance of Recall in Medical Diagnoses:** In the context of detecting a serious condition like cancer, False Negatives (failing to identify a cancer case) can be far more dangerous than False Positives (mistakenly predicting cancer when there is none). Missing a positive case could delay critical treatment. Thus, even though Classifier 2 has better accuracy, Classifier 1 might be preferred because it misses fewer cancer cases.

In summary, while Classifier 2 is more accurate overall, Classifier 1 misses a smaller share of the actual positive cases (higher Recall), making it more suitable when it is crucial not to miss positive cases.
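The figures in this example can be checked with a short script using the confusion-matrix counts given for the two classifiers. Precision is not discussed in the worked answer; it is computed here only to make the trade-off visible.

```python
from typing import Dict

# Counts taken from the example above.
classifiers: Dict[str, Dict[str, int]] = {
    "Classifier 1": {"tn": 107, "fp": 36, "fn": 12, "tp": 45},
    "Classifier 2": {"tn": 116, "fp": 4, "fn": 30, "tp": 50},
}


def metrics(c: Dict[str, int]) -> Dict[str, float]:
    """Accuracy, Precision and Recall from confusion-matrix counts."""
    total = c["tn"] + c["fp"] + c["fn"] + c["tp"]
    return {
        "accuracy": (c["tp"] + c["tn"]) / total,
        "precision": c["tp"] / (c["tp"] + c["fp"]),
        "recall": c["tp"] / (c["tp"] + c["fn"]),
    }


results = {name: metrics(counts) for name, counts in classifiers.items()}
for name, m in results.items():
    print(name, {k: round(v, 3) for k, v in m.items()})
```

The script reproduces the accuracies of 0.76 and 0.83 and the recalls of about 0.79 and 0.625. It also shows that Classifier 1 pays for its higher Recall with lower Precision, since it raises many more False Positives (36 against 4).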
